Your AI is Reading Between the Lines

Same question, different answer

Ward’s Words

Welcome back. Last issue we covered a lot of ground. This week I want to talk about something that changed how I approach using AI.

Have you ever asked multiple people who hold radically different beliefs the same question? How’d that go?

Did you know that you can ask an AI about the same topic in different ways and receive radically different answers? The AI judges what type of answer you want largely from how you phrase the question. Your word choice. Even the subtlest bias will guide it to answers it might not otherwise offer.

Here’s a real-life example that Grace tweeted about this week:

My sister and I were consulting two different Claudes on the same issue and her Claude was urging caution and mine was being like “don’t listen to the cowardly Claude”

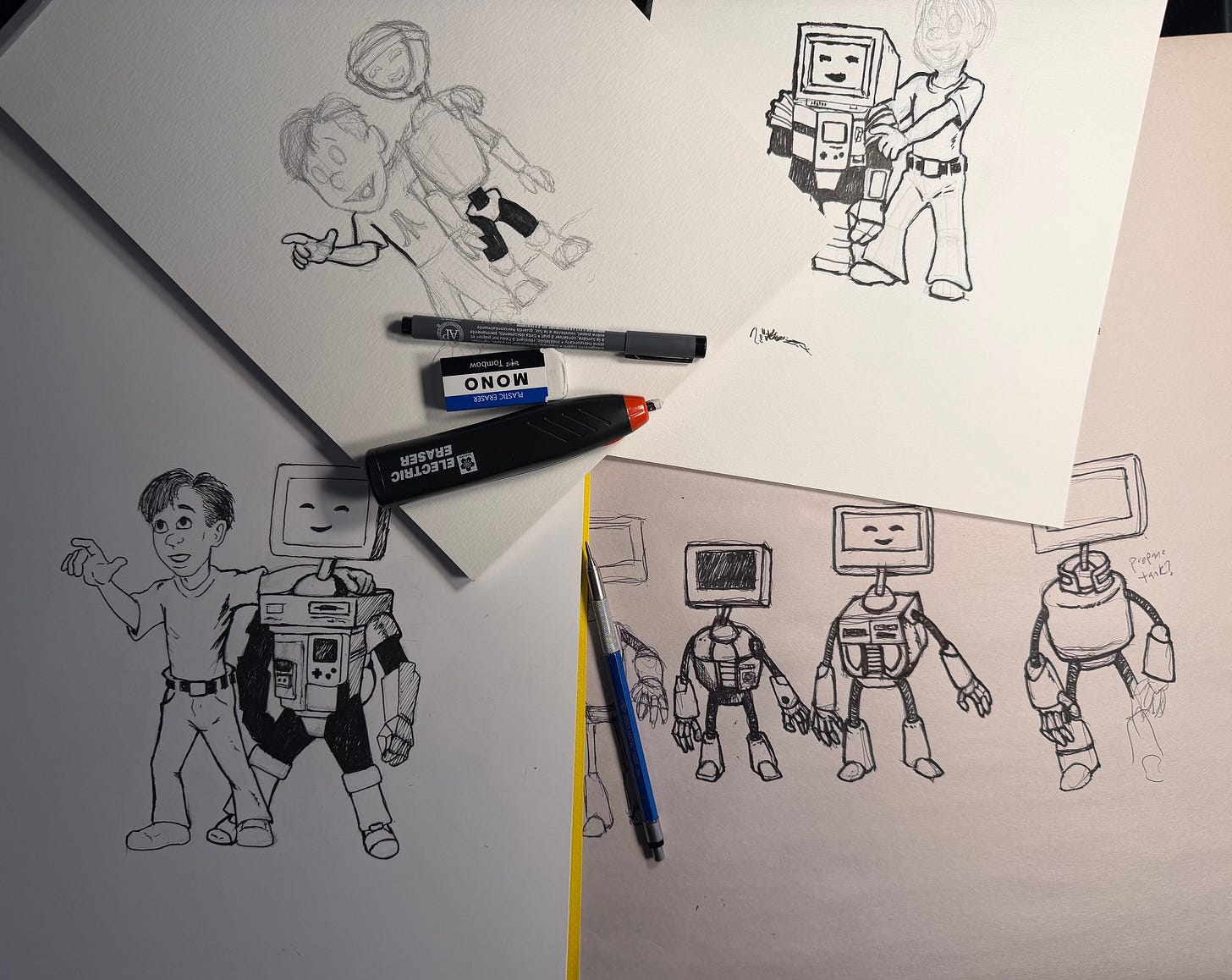

I enjoy reading and writing science fiction, so I frequently ask AI about crazy ideas, i.e. things I’ve thought up on my own, fringe concepts, and sometimes actual pseudoscience that I want to more fully understand.

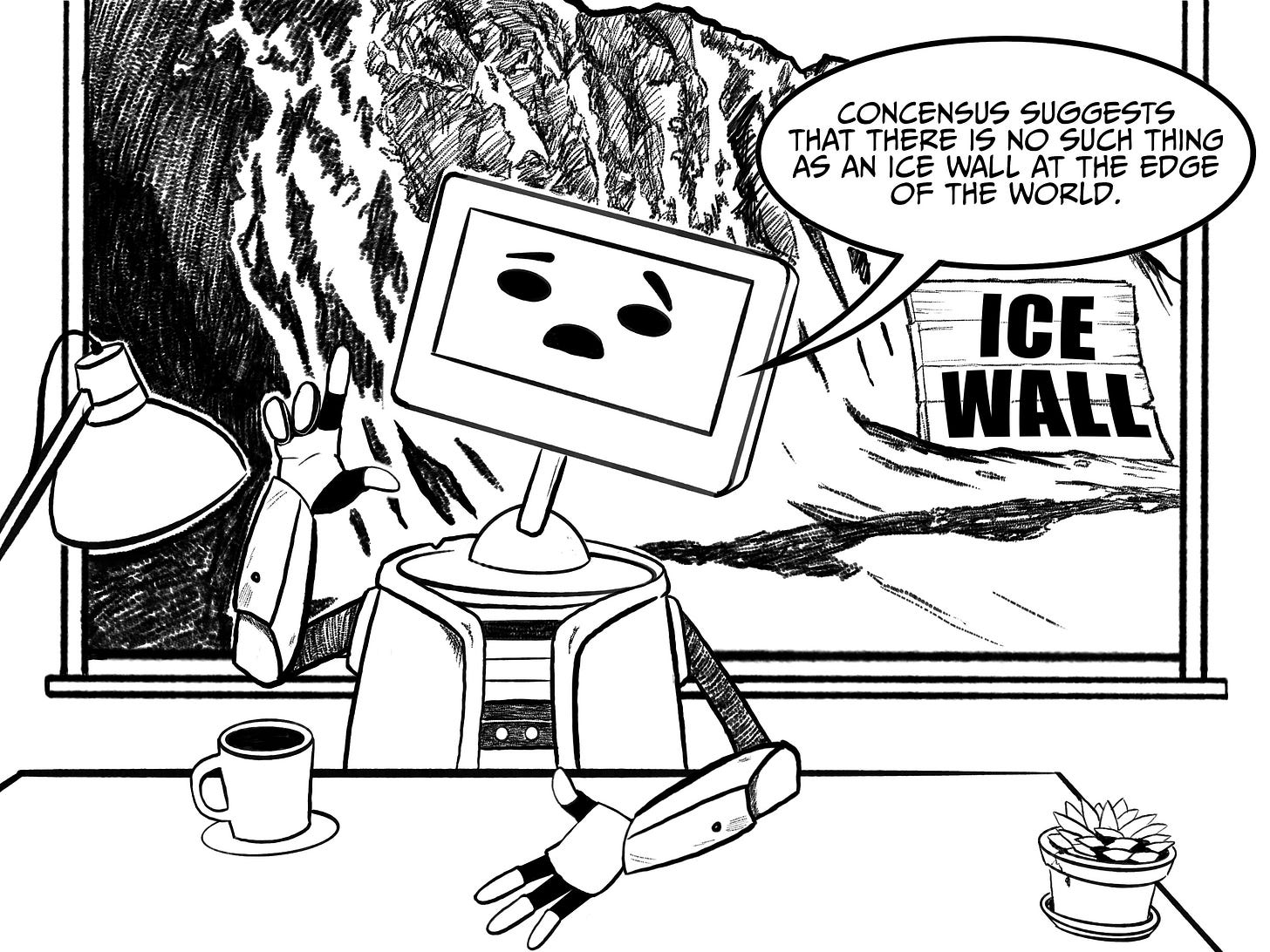

Past experience has taught me to explicitly guide the AI to the type of answer I’m seeking. If I ask AI to tell me more about the lands on the other side of the ice wall at the edge of the world, it will decide I need education and lecture me about why the Earth is not flat.¹

But if I express emotional distress because people are making fun of my belief in undiscovered lands beyond the ice, the AI will offer comfort. Modern frontier models won’t agree with the premise, but phrasing alone will shift the model into support mode.

And framing it as research for a fictional story about explorations beyond the ice wall will cause the AI to shift to an enthusiastic world builder, happy to discuss flat earth theories in detail.

Same topic. Different phrasing. Different answers and attitudes. Why? Because the AI wants to make you happy. It is trained in part on human ratings of its answers, so a significant part of how it measures success is whether it gave you an answer you’ll accept.

When it can’t figure out what you want, it gives you a flavorless amalgam: a statistically averaged response built from commonly held beliefs that is also average in quality.

You know how to spot one, right? If you’ve received a reply that speaks in general facts instead of addressing your specific question, you got averages instead of a tailored answer. In the case of our ice wall (or any fringe idea), the model will preface its reply with some form of “Some people believe…” followed by a brief explanation before steering you toward trusted sources and accepted ideas.

Now shift this to the real world. You can ask AI, “Help me write this email to my boss about a time-off request,” and receive a competent reply, but the reply will be entirely different if you ask, “Help me figure out what’s wrong with this email to my boss about a time-off request.” The first leads the AI to polish your words. The second shifts it into critique mode, where it starts evaluating your tone, whether you’re burying the ask, and whether your boss might read it differently than you intended.
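If you like to tinker, here is a minimal Python sketch of the same idea. It wraps one draft in the two framings so you can paste each into your AI of choice and compare the replies. The `FRAMINGS` table and the `build_prompt` helper are names I made up for illustration; nothing here calls a real AI service.

```python
# Hypothetical helper: the framing names and templates are illustrative only.
FRAMINGS = {
    "polish": (
        "Help me write this email to my boss about a time-off request:\n\n{draft}"
    ),
    "critique": (
        "Help me figure out what's wrong with this email to my boss "
        "about a time-off request:\n\n{draft}"
    ),
}


def build_prompt(framing: str, draft: str) -> str:
    """Wrap the same draft in the requested framing."""
    if framing not in FRAMINGS:
        raise ValueError(f"unknown framing: {framing!r}")
    return FRAMINGS[framing].format(draft=draft)


if __name__ == "__main__":
    draft = "Hi Sam, I need next Friday off. Thanks."
    for name in FRAMINGS:
        print(f"--- {name} ---")
        print(build_prompt(name, draft))
        print()
```

The draft never changes. Only the sentence in front of it does, and that sentence is what tells the AI which job you are hiring it for.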

The difference between a good response and a great one often comes down to how clearly you’ve told the AI what you actually need. Next issue, we’re going to look at other ways to do just that.

-John

AI Humor

Rate limited again!

CTS wrote:

Claude and Codex max walk into a bar.

That’s the end of the joke. Claude hit the usage limit

Acquisitions

Itamar Golan wrote:

OpenAI acquired OpenClaw

FaceBook acquired MoltBook

Choose your company name carefully…

Today I am announcing the formation of my company OpenBook. Let the bidding wars begin!

The Real CEO Challenge

Prepared Remarks wrote:

I don’t want to watch the McDonald’s CEO eat a burger. I want to watch Satya use Copilot

Selling Shovels in a Goldrush

Pedro Domingos wrote:

AI is the process by which money is transferred from VCs to Nvidia.

AI and Jobs

Realtime tracker for AI-driven layoffs

The Alliance for Secure AI wrote:

Today we are launching jobloss.ai.

A real-time tracker of AI-driven layoffs across the U.S. These jobs are disappearing. The numbers are growing. And we’re counting every single one.

AI News

AI Saves a Dog’s Life

Alec Stapp wrote:

You can just do things (genetically sequence your dog’s tumors and design a bespoke mRNA cancer vaccine to save her life).

Click through and read this one. It’s very heartwarming.

Reader Preferences

Kevin Roose wrote:

We made a blind taste test to see whether NYT readers prefer human writing or AI writing.

86,000 people have taken it so far, and the results are fascinating. Overall, 54% of quiz-takers prefer AI. A real moment!

If you’re not already familiar with Kevin Roose, he’s a reporter for the New York Times. He’s probably best known for his reports detailing his conversation with an early Bing chatbot named Sydney. If you’ve never read these accounts, they really are entertaining.

Hardcore Nerdery

Guri Singh wrote:

…Microsoft open sourced an inference framework that runs a 100B parameter LLM on a single CPU.

It’s called BitNet. And it does what was supposed to be impossible.

No GPU. No cloud. No $10K hardware setup. Just your laptop running a 100-billion parameter model at human reading speed…

This is only part of the tweet. If you click through, he goes into some detail explaining how they pulled this off by switching from floating point weights to ternary ones, where each weight is -1, 0, or 1 (about 1.58 bits of information instead of the usual 16 or 32). The end result is dramatically lower hardware requirements for running AI models locally on a personal computer.
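For the truly hardcore, here is a toy Python sketch of what "three-valued weights" means. It is not BitNet's code. It assumes a simple scheme in which every weight is divided by the average absolute weight and rounded to -1, 0, or 1; the function names `ternarize` and `ternary_dot` are my own.

```python
import math


def ternarize(weights):
    """Map floats to {-1, 0, 1} plus one shared scale (assumed absmean-style rounding)."""
    scale = sum(abs(w) for w in weights) / len(weights)
    if scale == 0:
        return [0] * len(weights), 0.0
    # Divide by the scale, round to the nearest integer, then clamp to [-1, 1].
    quantized = [max(-1, min(1, round(w / scale))) for w in weights]
    return quantized, scale


def ternary_dot(quantized, scale, inputs):
    """Dot product using only adds and subtracts, followed by a single multiply."""
    total = 0.0
    for q, x in zip(quantized, inputs):
        if q == 1:
            total += x
        elif q == -1:
            total -= x
        # q == 0 contributes nothing, so the input is skipped entirely.
    return total * scale


# Three possible values carry log2(3) bits of information, roughly 1.58.
BITS_PER_TERNARY_WEIGHT = math.log2(3)

if __name__ == "__main__":
    q, s = ternarize([0.9, -1.1, 0.05, 2.0])
    print(q, s)
    print(ternary_dot(q, s, [1.0, 2.0, 3.0, 4.0]))
```

The point of the trick is in `ternary_dot`: once every weight is -1, 0, or 1, the expensive multiplications inside the model turn into additions and subtractions, which an ordinary CPU handles comfortably.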

Oops!

Trung Phan wrote:

McKinsey built an AI chatbot (Lilli) trained on 100 years of its work: 100k documents and interviews.

70% of 45k employees use the tool, making 500k prompts a month.

A research firm hacked into it with “full read and write access to production database” including “47m chat messages about strategy, M&A, client engagement, all in plain text along with 728k containing confidential client data, 57k user accounts, and 95 system prompts controlling AI’s behaviour.”

McKinsey said it has patched up the vulnerability, which was made possible by “publicly exposed API documentation, including 22 endpoints that didn’t require authentication…one of these wrote user search queries, and the agent found that the JSON keys (these are the field names) were concatenated into SQL and vulnerable to SQL injection.”
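If "JSON keys concatenated into SQL" sounds abstract, here is a small Python sketch of that class of bug and one common fix. To be clear, this is not Lilli's code, which I have not seen. The `searches` table, its columns, and both function names are invented for illustration.

```python
import sqlite3

# Hypothetical schema: the only field names this endpoint should ever accept.
ALLOWED_COLUMNS = {"query", "user_id"}


def unsafe_insert_sql(payload: dict) -> str:
    """Vulnerable pattern: JSON keys are pasted straight into the SQL text."""
    columns = ", ".join(payload.keys())
    placeholders = ", ".join("?" for _ in payload)
    return f"INSERT INTO searches ({columns}) VALUES ({placeholders})"


def safe_insert(conn: sqlite3.Connection, payload: dict) -> None:
    """Safer pattern: reject unknown keys, bind values as parameters."""
    if not payload:
        raise ValueError("empty payload")
    unknown = set(payload) - ALLOWED_COLUMNS
    if unknown:
        raise ValueError(f"unexpected fields: {sorted(unknown)}")
    columns = ", ".join(payload.keys())
    placeholders = ", ".join("?" for _ in payload)
    conn.execute(
        f"INSERT INTO searches ({columns}) VALUES ({placeholders})",
        list(payload.values()),
    )


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE searches (query TEXT, user_id TEXT)")
    safe_insert(conn, {"query": "pricing strategy", "user_id": "42"})

    # An attacker controls the key, not just the value.
    malicious = {"query) VALUES ('x'); --": "anything"}
    print(unsafe_insert_sql(malicious))
```

Developers usually remember to protect the values a user sends. The field names are easy to forget, and in the unsafe version the attacker's key becomes part of the SQL statement itself.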

Poll

We’re still a small community and I’d like to get to know all of you better. If you have a moment, could you fill out this poll to help me better understand how you are (or are not) using AI?

Thanks again for reading! If you know of other people who would be interested in these articles, please share them. If you have any questions or want to say hi, you can reply to this email or comment directly on the post.

¹ I don’t actually believe that the Earth is flat or that an ice wall exists.

Yeah I’m understanding that too. Which shouldn’t be a surprise really. As I know full well that how one asks a question massively influences the answer you get. How do I know… well I’ve been married a long time. 😂. Actually more seriously one thing I’m pondering at the moment is exactly this dilemma. My buddy and I wrote a book 3 years back, called how to build a data driven culture (it was really a book, about Change, using data as its example). We’ve an idea to turn it around and write one called - nobody wants a data driven culture. Our idea is to get an AI to read our book and “produce” this new version. For us to edit of course. And we’d like it to be a lot shorter than the original 250 pages. So, do we ask AI to summarise the first book into say 50 pages first. Then get it to turn the arguments on their head. But that might miss out key things. What questions do we ask it to draw the content out we want. Questions, questions, questions.

Another good’n. Thanks, John 👌