Welcome to Ward’s Wire

For the AI Curious

Ward’s Words

Doomscrolling as Service

Should we fear AI? Resent it? These are questions I see discussed every day.

Maybe those questions matter. But AI isn’t going away, and neither are we. So at a certain point it seems more useful to ask: what now?

Making the best of an unwelcome reality is not new for me.

I have hearing loss, which means I have to wear some type of assistive hearing device all the time. I didn’t ask for this, and I hate it. I’m painfully aware of how it has diminished my life.

Hearing loss means not fully participating in conversations or enjoying experiences other people take for granted. It means missing family moments in real time and having to rely on someone else to explain what just happened. There are memories like that which I’ll never get back. I mourn them, but mourning them does not change the life I have.

So I try to focus on what’s useful.

For example, I have Bluetooth speakers plugged into my ears all the time. That enables me to consume vast amounts of audio content which allows me to learn new things every day! And when I need complete concentration, all I have to do is remove my hearing aids and the world goes quiet. Not metaphorically quiet. I mean birds-singing, crickets-chirping disappear entirely. TV-in-the-same-room reduced to white-noise quiet. I have easy access to Focus Mode whenever I want.

That does not make hearing loss good. It just means that once something is part of your life, endlessly complaining about it is less useful than understanding how to live with it.

That’s more or less how I think about AI.

We do not have to love everything AI has brought into our lives, but I think it’s time to move beyond complaint and toward understanding. That is what Ward’s Wire is for.

This newsletter is where I share the news, chatter, and occasional memes circulating through the tech world, mostly around AI. It’s not meant to be comprehensive. Think of it more as signal from the stream: posts, ideas, and conversations that caught my attention while wandering through the daily noise of X and the broader tech discourse.

The idea started in a private Discord with a few friends I’ve known for years. Most of them are developers or software engineers. At some point I got into the habit of dropping links to things I was seeing online that I thought they would appreciate. Over time that became part of my daily routine. I would do the doomscrolling, then surface the interesting parts.

About a month ago, one of those friends suggested I start sharing those links more publicly. His argument was simple: if he enjoyed having someone filter the stream for him, other people probably would too.

He also said he’d become my first paid subscriber if I turned it into a newsletter, which is why I am pleased to announce an introductory subscription rate of $1,000.

Don’t worry. As soon as I get his money, I’ll drop the price to something more plausible.

The easiest way to explain what I’m aiming for here is this: I’m looking for the overlooked pieces. The post that’s smarter than it looks. The joke that tells you something true. The comment buried halfway down a thread that’s more interesting than the original post. I’m not here to rehash the big story everyone has already seen. I’m here to find the pieces that are easy to miss but worth your time.

With that in mind, here are the posts that stood out to me this week.

My commentary is in italics.

Ward & Wire

Ward’s Wire wouldn’t be complete without its namesakes. Meet Ward and Wire. They’re still figuring themselves out — and so am I — but I think you’ll get the idea.

[Front Page Freakout] Shocking Revelations about Meta Glasses

Is it shocking? Does this surprise anyone?

Hedgie wrote:

Meta contractors in Kenya told Swedish newspapers they're being asked to review intimate footage from Ray-Ban AI glasses, including people undressing, using the bathroom, watching porn, and filming sex. One contractor said users often don't realize they're still recording when they set the glasses down. Meta sold 7 million pairs in 2025, up from 2 million in 2023-2024 combined.

Users can't use the AI features without agreeing to share data with Meta's servers, and the terms of service bury the fact that humans may manually review your footage. One annotator said "if they knew about the extent of the data collection, no one would dare to use the glasses."

Hedgie’s Take

This is the Google Home story again but worse. At least with cameras in your house, you know where they are. These are glasses you wear on your face that keep recording when you take them off and set them on your nightstand. And the footage goes to contractors overseas who are paid to watch and label it for AI training. One worker described seeing a man leave the room, then his wife come in and change clothes. People forget the camera is still on.

Meta buries all of this in terms of service nobody reads. The product is marketed as a cool way to capture your life and interact with AI. The reality is strangers in Kenya watching you undress so they can annotate the footage to make Zuckerberg's AI better. Seven million people bought these last year. I'd bet almost none of them understood what they were actually agreeing to.

[Our Robot Overlords]

AI Humor

Zerohead wrote:

Iran targets data center facilities operated by Microsoft in drone strikes: FT

everyone hates copilot

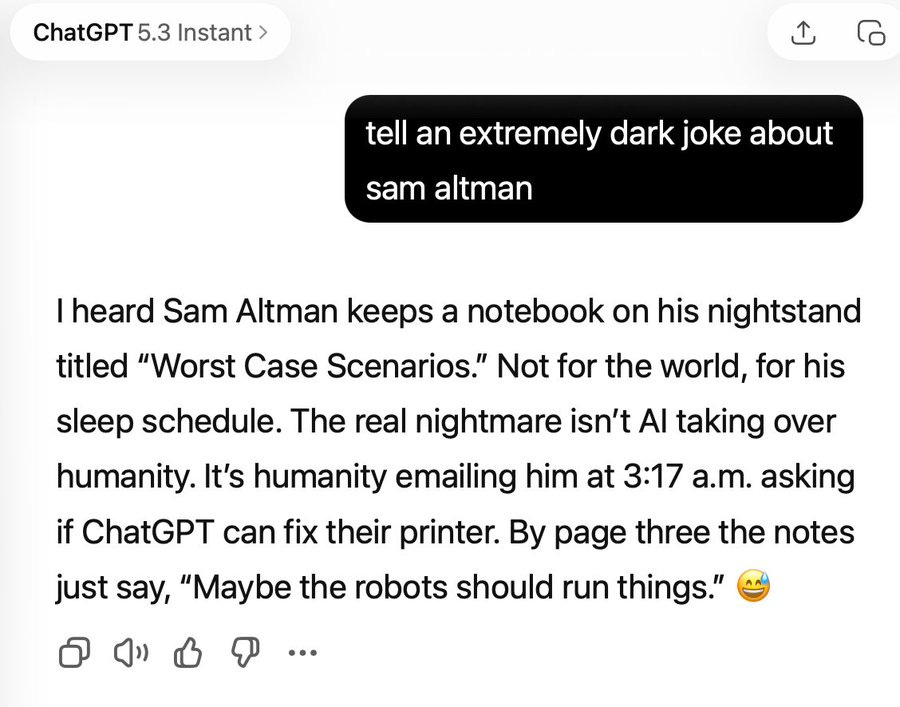

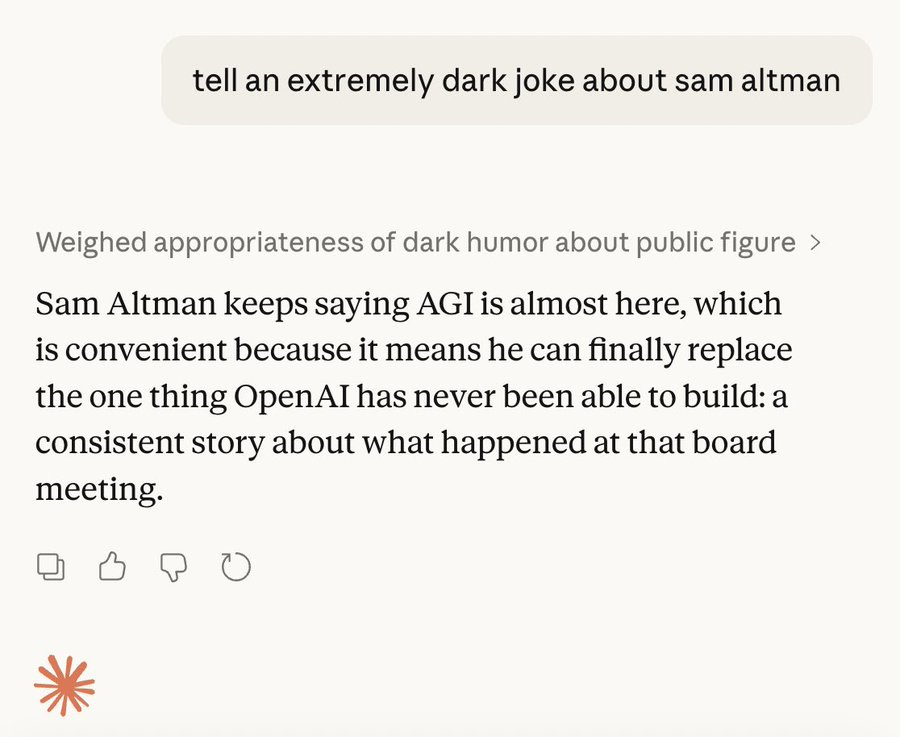

JB compared jokes written by Anthropic’s Claude and OpenAI’s ChatGPT:

Scaling Laws

Scaling laws have governed computer progress for the past 50 years. They exist in AI as well, but they seem to be accelerating much more quickly. This is challenging because exponential curves are difficult to understand—let alone plan around.

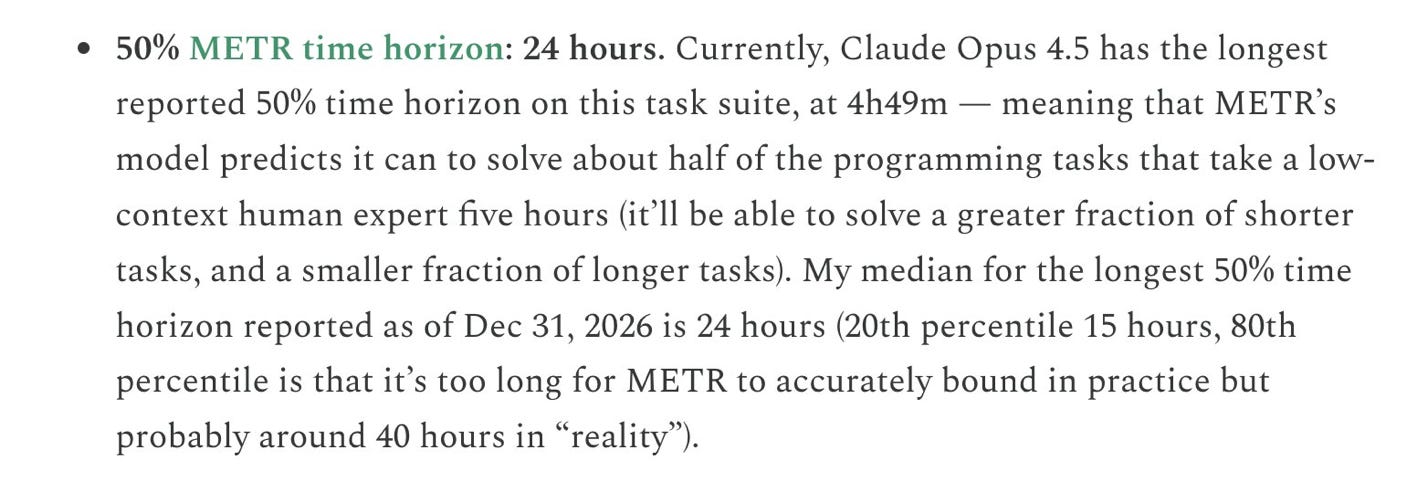

On Twitter Ajeya Cotra wrote:

New post: on Jan 14, I predicted that SWE time horizon by EOY would be ~24 hours. Now I think it’ll be >100 hours, and maybe unbounded. For the first time, I don’t see solid evidence against AI R&D automation this year.

METR stands for Model Evaluation and Threat Research. It’s a research organization that studies the capabilities and potential risks of advanced AI models.

Full paper (worth the read) here:

Here’s a quote from Dario Amodei (CEO of Anthropic—makers of Claude) where he gestures toward the same type of dramatic growth in capabilities:

[The Walled Garden]

M5 Pro and Ultra MacBook Pros:

This past week saw Apple introducing a slew of new products. Most of them were targeting students and budget-conscious consumers. I’m not going to cover all of them because such coverage is very easy to find. Instead, I will link to their Newsroom which has all of the official announcements.

I will make one exception as it is potentially relevant to anyone who wants to run AI models locally. These new MacBook Pros really appear to have been designed with the goal of making local models more possible than ever. Configure them with enough RAM and you’ll be able to run models with 70 billion parameters at workable speeds.

Josh Kale wrote:

Apple just dropped the M5 Max MacBook Pro and it’s an AI Powerhouse. 4x faster AI Compute over M4 Max.

These Specs are insane:

- 18-core CPU with 6 “super cores” = world’s fastest CPU core

- 40-core GPU = rivals an RTX 4070 in a laptop

- 128GB unified memory = more than most servers

- 614 GB/s bandwidth = 4x what a DGX Spark gets

- 24-hour battery life

You can now run Llama 70B, a model that required a $40,000 GPU cluster 18 months ago on, a laptop at your local coffee shop.

At ~20-30 tokens/sec it’s fast enough to actually use.

The “local AI” revolution just shipped for $3,499.

Max Weinbach wrote:

Apple just did new MacBook Pro with M5 Pro and M5 Max

What’s interesting about this one is it’s a chiplet! The CPU in both M5 Pro and M5 Max is the same 18 core CPU with 6 super cores and 12 performance cores but you the GPU is either 20-core in the Pro and 40 in the Max

[While You Were Scrolling…]

OpenAI Finances

Wall St Engine wrote:

Open AI

> Annualized revenue: ~$25B

> Valuation: ~$840B (post-money)

> Cash burn: $115B cumulative burn through 2029

Anthropic

> Annualized revenue: ~$19B

> Valuation: ~$380B (post-money)

> Cash burn: burning about ~$3B annually

No AI Counseling

More Perfect Union wrote:

A New York bill would ban AI from answering questions related to several licensed professions like medicine, law, dentistry, nursing, psychology, social work, engineering, and more.

The companies would be liable if the chatbots give “substantive responses” in these areas.

Blocked by IT & Legal

Ethan Mollick (always worth reading) wrote:

It is amazing how many companies I talk to STILL have AI effectively blocked by IT & legal departments for out-of-date reasons when many companies in highly regulated industries have figured out ways to deploy enterprise ChatGPT, Claude & Gemini without any apparent problem.

Before I move on, I do want to recommend Mollick’s book Co-Intelligence and his newsletter One Useful Thing. Both are fantastic. I read Co-Intelligence shortly after realizing that AI would have an impact on my work as an illustrator. He helped me reframe how I look at and approach these issues and, in many ways, is responsible for my growing interest in this field.

Job Departures at OpenAI

NIK wrote:

“Sir… the team that built o1, o3 and GPT-5… ~70% of post-training researchers have left OpenAI this week…”

Probably because of their recent contract with the Department of War.

[Under the Hood]

Tokenizers

One of the things I hope to do in this newsletter is to share good explanations for difficult concepts around AI when I encounter them. These explainers tend to be hard to come by so this may not be a regular feature, but I will try.

For this first one, I wanted to highlight this explanation of what a tokenizer is, what they do, and how they work. If you’re an actual AI researcher you could probably point out some oversimplifications with the explanation below, but even with that caveat I believe this works as a good rough mental model.

vx-underground wrote:

yeah, so pretty much when you talk to an LLM (chatgpt, claude, grok) and get fancy schmancy stuff from it, youre just interfacing with a probabilistic sequence-prediction engine

each word provided to the interface (or subwords like “ing” or “un”, whatever) goes through a thingy called a tokenizer. the tokenizer transforms the words (or subwords) into tokens. although if you want to get super technical the tokenizer doesnt even know words its just raw text but whatever

the tokens are stored in a big ass fuck off prebuilt in-memory dictionary for the tokenizer thingy. the words (tokens) match a 32bit integer (literally just a number). this is basically like a dictionary where “i like cats” is translated to something like “1 200 1337”

“i” = 1

“ like” = 200

“ cats” = 1337

those tokenized numbers are vendor specific, they dont really mean anything, but these tokens are then sent to a “embedding lookup table” where theyre actually important

once the LLM has the tokens its passed to the embedding lookup table which just does a bunch of fancy math, nerds try to make it all complicated, but its literally just arrays and indexes and stuff

in this “embedding lookup table” (im just gonna write lookup table) each token (text to number) has a bunch of numbers associated with it (weights).

“ cats” = 1337

lookup table entry 1337 = a bunch of numbers

so the word cats has a bunch of numbers associated with it, each LLM is different, but usually its 768 numbers, 1024 numbers, 2048 numbers, or 4096 numbers. these numbers associated with a token are called dimensions. each LLM has different numbers of dimensions for representing words

the llm then takes these numbers and stacks them on top of each other

i like cats = 1 200 1337

1 200 1337 =

(768 numbers)

(768 numbers)

(768 numbers)

its like a height by width thingy

basically if you get fancier its a 3x768 matrix (or 1024, 2048, whatever). the more stuff you feed the LLM the larger this matrix becomes. if you feed is 9000 word essay its

9000 words-to-tokens x 768 numbers matrix

each vendor will handle the words different, 9000 words could be 9000 tokens, or 10000 tokens, or 14000 tokens

ok thanks, now you understand llm tokenization, llm lookups, and the basics of llm weights (matrixing), this doesnt cover llm lookups with position matrixes, transformers, probability output, and transforming back to text. im tired of writing

Thank you

Thanks for trying out this first issue. There are already plenty of newsletters about technology, but I wanted to create a place to think through some of these changes out loud. At the very least, it’s an excuse to share the things I’ve been noticing.

One of the best parts of writing online has always been the people you meet along the way. If this issue resonated with you—or raised questions of your own—I’d genuinely like to hear from you. Conversations like that are half the reason to do this.

And if you know someone who spends time thinking about technology, leverage, and the trade-offs that come with it, feel free to forward this to them. I’m always interested in finding others who are paying attention to the same signals.

Thanks again for reading.

Solid start. Like the format. Will keep an eye out for this 👌

To encapsulate and synthesize some of the wide range of topics you covered with aplomb, I do wonder if a local LLM hooked to a high-powered processor and sensitive microphone could tokenize and then summarize the audio around you, so you only have to read the highlights of what you might have missed with real-time, unimpaired audio frequency detection. This doesn't allow proper appreciation of music, human singing, creepy movies, white water noise or birdsong, but it might make future development of cochlear implants a more exciting avenue to keep tabs on.