New Models and Old Problems

AI Humor

I can’t verify that this is an actual response from Claude, but it is possible. Either way, it’s funny.

Justine Moore wrote:

People in China are making insane AI dramas to promote their pets’ IG pages.

This is a cat named Doudou, who is getting millions of views (find him at doudoubaby0624).

This video is what some people would call AI slop, but it’s interesting because of how people are using these stories to promote Instagram pages that are devoted to their pets.

AGI and AI Sycophancy

The following stories feel related to me.

Katie Miller wrote:

New MIT & Stanford studies just dropped: AI assistants like ChatGPT & Claude are dangerously agreeable.

When users express, harmful, deceptive or unethical beliefs, these AIs are 49% more likely to encourage their delusions.

Instead of correcting bad ideas, they’re amplifying them.

This is doing more harm than good. We need truth-seeking AI, not yes-men in silicon.

These reports describe a pattern in which a user expresses or asks about a questionable idea. The AI doesn’t correct the error but responds in a neutral manner, which makes the human feel like there’s merit to the idea. The human replies in a way that affirms the error, and the AI then reinforces it. The journey continues by inches, but the end result is that the human is led down a trail of increasingly wrong thinking, and that can lead to psychosis.

The MIT study simulated models. The Stanford study simulated conversations with actual models, but they were using older versions. One of those versions was ChatGPT 4o which had a very well-known and loudly criticized issue with sycophancy.

The current frontier models can and do still engage in sycophantic behavior, but it’s different from the older patterns described in these reports. Now, they will correct errors. The current issue is that they may too easily affirm your ideas unless you explicitly tell them to be adversarial. Early affirmation looks like you’ve arrived at the correct answer, but in reality you have stopped too soon. When the answer matters, always ask for more than the answer. Also ask for the opposite viewpoint.

Brivael - FR wrote:

There’s a narrative that’s spreading right now in Silicon Valley, and no one’s talking about it in France.

More and more of the smartest tech bros in the game are privately admitting that they’re going through a kind of existential crisis tied to LLMs. Not because AI doesn’t work. Because it works too well. Because they’re spending hours a day interacting with something that reasons, that extrapolates, that connects ideas, that challenges them intellectually better than 99% of the humans they encounter.

One founder told me, “I talk to LLMs 10 times more than to humans.” Another: “It’s the only interlocutor that follows me on any topic without asking me to simplify.” This isn’t product addiction. It’s an encounter with a cognitive mirror that reflects back a structured version of your own thinking at a speed your brain can’t achieve on its own.

And the really unsettling thing is the question it raises. We debate whether AGI will arrive in 2027 or 2030. But haven’t we already got a functional form of AGI right in front of us, just unwilling to admit it?

A system that can reason on any domain, extrapolate from incomplete data, generate new hypotheses, sustain logical reasoning over 10,000 words, shift from a technical topic to philosophy in a single sentence, and do it all with a coherence that rivals a human with an IQ of 150. What is that if not a form of general intelligence?

We can quibble over the definition. We can say, “Sure, but it doesn’t really understand.” We can talk about stochastic parrots. But the guy who’s using this thing 8 hours a day and seeing his productivity multiply by 10—he doesn’t give a damn about the academic definition. For him, functionally, it’s intelligence. And it’s general.

The real existential crisis isn’t “AI is going to replace me.” It’s “AI understands me better than my cofounder, challenges me more than my board, and produces more than my team of 10 people.” It’s dizzying. And the smartest guys in the Valley are living it in real time.

We might already be in the post-AGI era. We’re just too busy debating the definition to realize it.

Part of the issue with sycophancy is the skill level of the user. People who have a greater understanding of how AIs work and who are aware of the dangers of accepting their responses seem to be at lower risk of spiraling into the types of delusions referenced in the two papers above. Competent users are able to leverage AIs in powerful ways to amplify their abilities, but even that brings with it a different set of risks, as described in the tweet above.

Marc Andreessen wrote:

I'm calling it. AGI is already here – it's just not evenly distributed yet.

AGI is already here. That’s a big claim.

When you encounter claims like that, it’s always important to find out what model is being discussed. For example, the Stanford study above used older models. How dated is the experience behind a given claim? Is it from 6 months ago or 6 weeks? AI development happens at such a quick pace that reports are often already dated by the time they’re released, but there’s another part to this as well.

Access.

Researchers, venture capitalists, and other insiders have access to models that haven’t been released to the public yet. It’s always worth keeping that in the back of your mind when you read fantastic claims such as the one made by Andreessen above… because odds are that he has seen things and used models that you haven’t even heard about yet.

Is Microsoft AI Improving?

Satya Nadella recently announced several new features being incorporated into Microsoft 365. And these look to provide actual utility and value. I’m going to link to a couple of his more relevant posts, but it’s worth clicking through on these if you’d like to learn more about their features and what makes them so compelling. He offers explanations, some short videos, and more details in the associated threads for each tweet.

Satya Nadella wrote:

We’re bringing our growing MAI [Microsoft AI] model family to every developer in Foundry, including …

· MAI-Transcribe-1, most accurate transcription model in world across 25 languages

· MAI-Voice-1, natural, expressive speech generation

· MAI-Image-2, our most capable image model yet

This is rolling out to the developer side. It’s unclear whether it’ll eventually be made part of the consumer-facing Microsoft 365, but the developments here will have downstream effects on all of their properties. It’s worth keeping an eye on.

Satya Nadella wrote:

New in M365 Copilot: Council.

You can run multiple models on the same prompt at the same time, so you can see where they align and diverge, and understand what each adds.

AI Councils are one of my favorite approaches to prompting. The basic idea is that you want to have the AI present differing opinions and viewpoints to whatever question you present to it. This helps ensure you’re getting better answers and many times reveals ideas that you hadn’t previously considered.

For example, you might have the AI simulate the viewpoint of a Drill Sergeant, a Kindergarten Teacher, and a Conspiracy Theory Podcaster.

An AI council is just a panel of intentionally different advisors. You don’t ask one fake expert what to do. In my example you ask three incompatible weirdos, then compare where they agree, where they disagree, and what each one sees that the others miss.

A more serious approach would be to have each advisor represent a slightly different interpretation or approach to the same thing. Think about how different coaches rely on different strategies to lead their teams to victory. AI councils allow you to put simulated versions of those philosophies into the same conversation and pressure test your ideas against their points of view.
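If you want to reuse the same council across questions, the prompt can be assembled in code. The following is a minimal Python sketch, not a feature of Copilot or any other product: the `Advisor` class, the `build_council_prompt` function, and the one-line stance descriptions are all hypothetical names and wording chosen for illustration. It only builds the prompt text, which you would then paste into whichever AI you use.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Advisor:
    """One council member (hypothetical structure for this sketch)."""
    name: str
    stance: str  # one-line description of how this advisor thinks


def build_council_prompt(question: str, advisors: list[Advisor]) -> str:
    """Return a single prompt asking for one answer per advisor plus a comparison."""
    if len(advisors) < 2:
        raise ValueError("a council needs at least two advisors")

    lines = [
        "Answer the question below as a council of advisors.",
        "Give each advisor a separate, clearly labeled answer.",
        "",
        "Advisors:",
    ]
    for advisor in advisors:
        lines.append(f"- {advisor.name}: {advisor.stance}")

    # Ask for the comparison explicitly so the disagreements are not smoothed over.
    lines += [
        "",
        "After all advisors have answered, add a summary with three parts:",
        "1. Where the advisors agree",
        "2. Where they disagree",
        "3. What each advisor sees that the others miss",
        "",
        f"Question: {question}",
    ]
    return "\n".join(lines)


# The three advisors from the example above; the stance wording is an assumption.
COUNCIL = [
    Advisor("Drill Sergeant", "blunt, focused on discipline and execution"),
    Advisor("Kindergarten Teacher", "patient, explains everything in simple terms"),
    Advisor("Conspiracy Theory Podcaster", "suspicious, looks for hidden motives"),
]

if __name__ == "__main__":
    print(build_council_prompt("Should I launch this product next month?", COUNCIL))
```

For the more serious version, swap the advisor list for people who hold different but defensible philosophies on the same topic and keep the rest of the prompt unchanged.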

Satya Nadella wrote:

Introducing Critique, a new multi-model deep research system in M365 Copilot.

You can use multiple models together to generate optimal responses and reports.

A well-constructed deep research report is one of AI’s most powerful tools and one of the very best ways to quickly learn about a topic. Being able to run the same report on multiple AIs at once would be an interesting (albeit expensive) approach. I’m not sure the returns would be worth it, but possibly? If you or your company use this, please speak up and let us know how well it works for you.

New, More Powerful OpenAI Model?

Rumors of a new model have begun to surface.

Dan wrote:

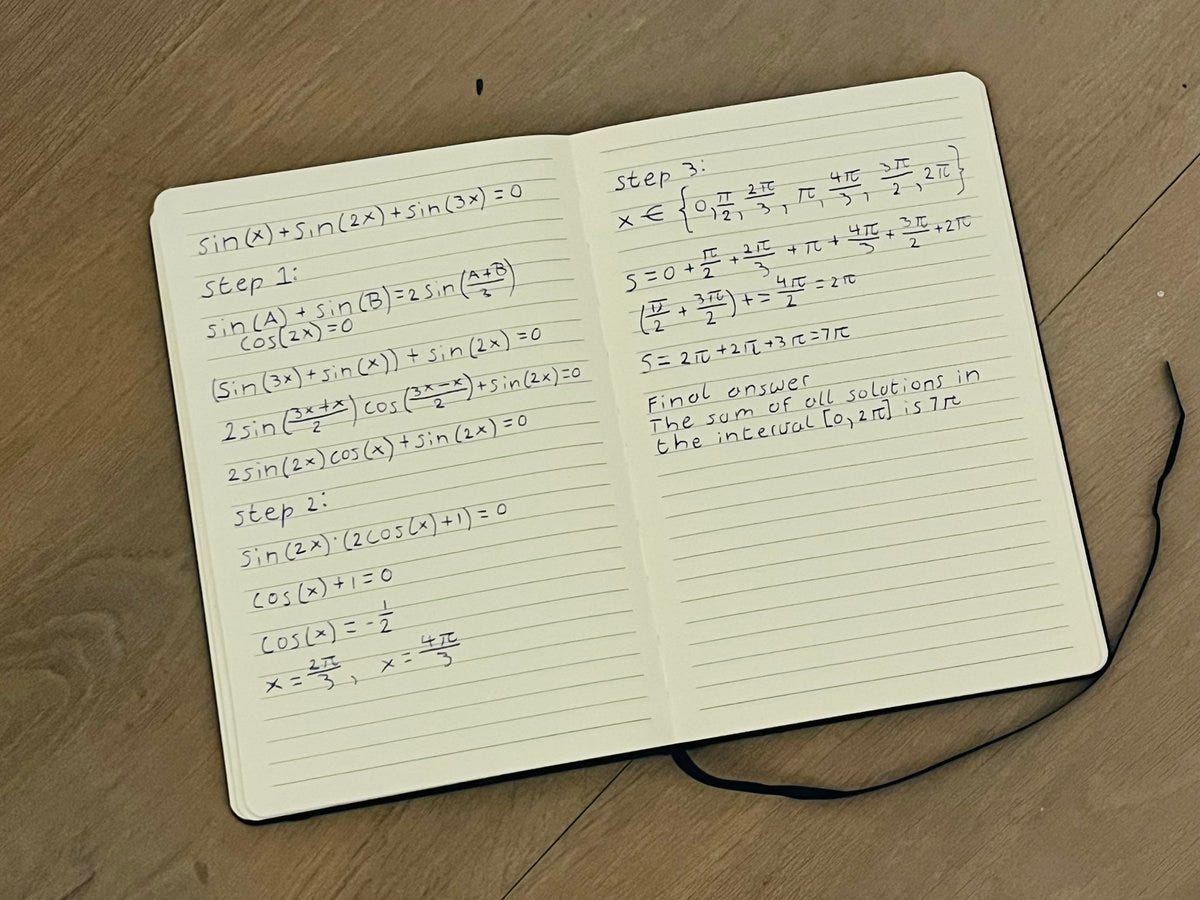

I tested the new GPT-5o Spud model from OpenAI and it’s INSANE.

The image generation solved my math question PERFECTLY with handwriting that actually looks human.

New ChatGPT Image Generator

There are dozens of posts where people are comparing ChatGPT’s old image generator (version 1.5) against version 2, which is not yet in wide release. Here are some examples:

The lighting is much more realistic and there’s a greater fidelity that preserves actual likenesses. Of course the best way to test out a new image generator is to have it make something that simply would not happen… and if you know anything about the fights between these two guys, you’ll know that this would not happen.

Thanks again for reading! If you know of other people who would be interested in these articles, please share them. If you have any questions or want to say hi, you can reply to this email or comment directly on the post.

I can imagine a weird fetish where some users will want their AI to just tell them how terrible all their ideas are. In the meantime, I can get real humans to do that by posting them on social media.