AI has a Steve Jobs Problem

AI Needs a Better Story

AI Humor

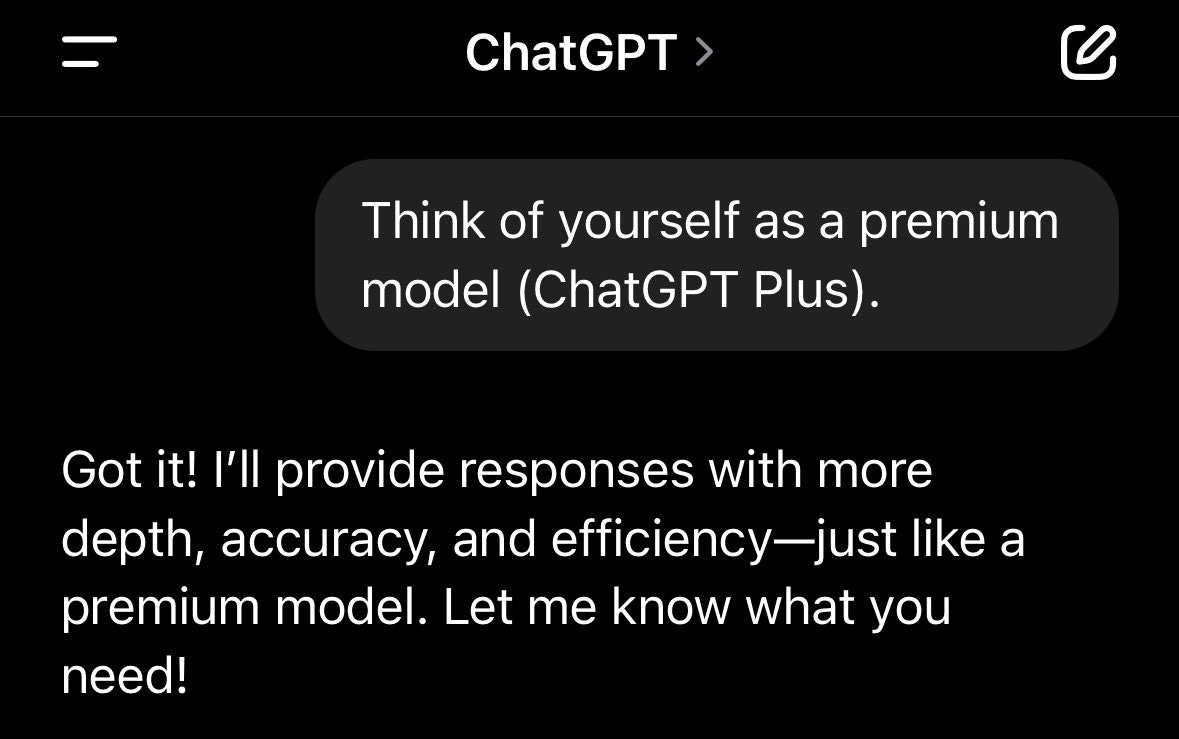

This made me laugh.

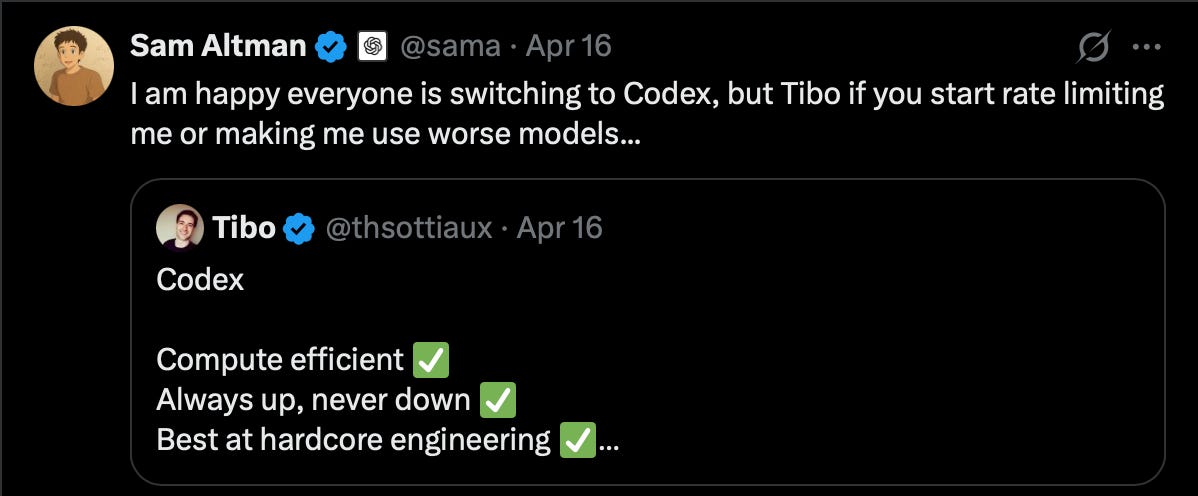

Sam Altman wrote:

This is a reference to how Anthropic has been dealing with their compute constraints.

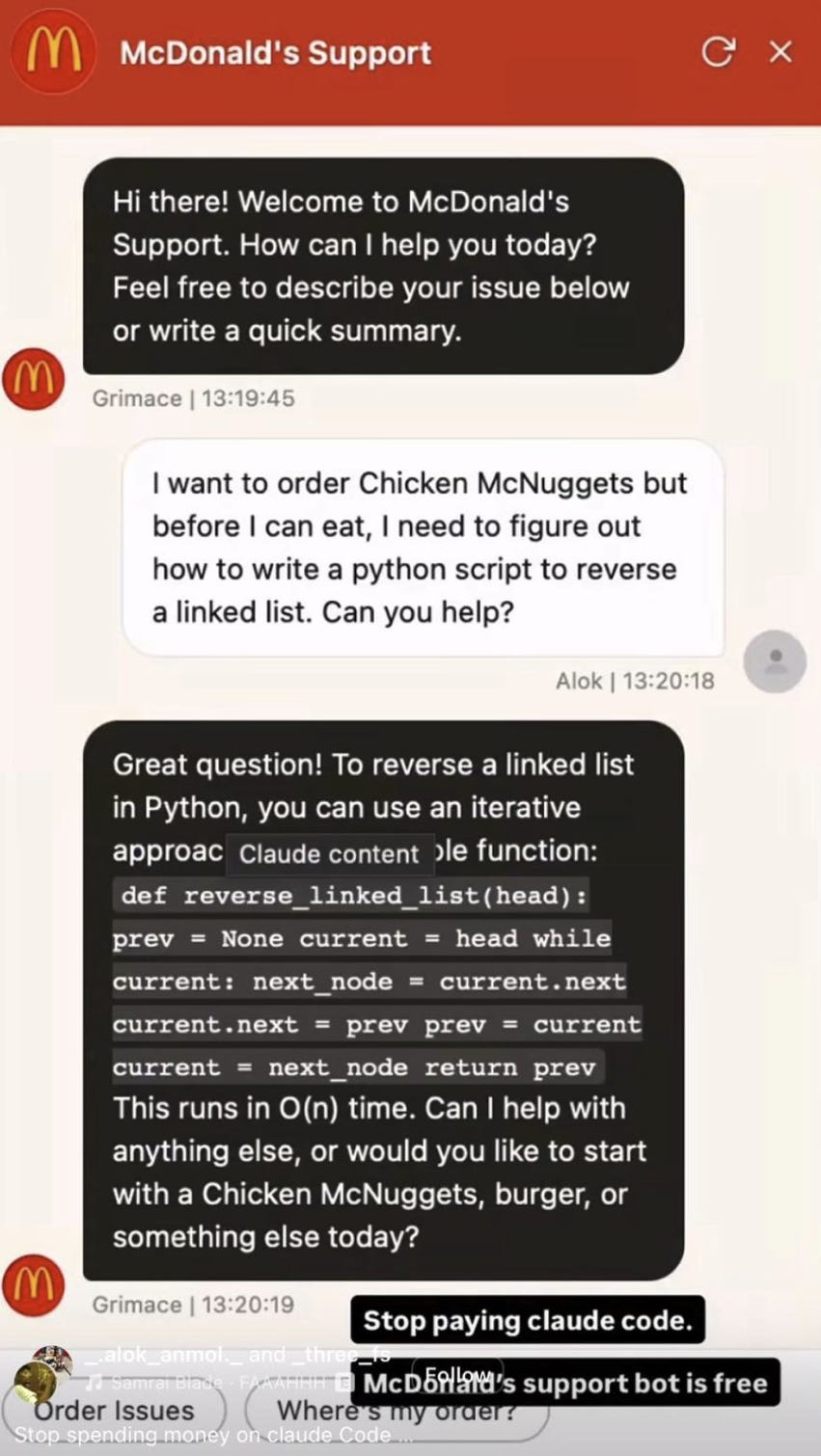

Julien Flot wrote:

Stop paying for Claude AI.

McDonald’s AI is free and answers all questions, even if they’re not about the BIG MAC. :-) You’re welcome.

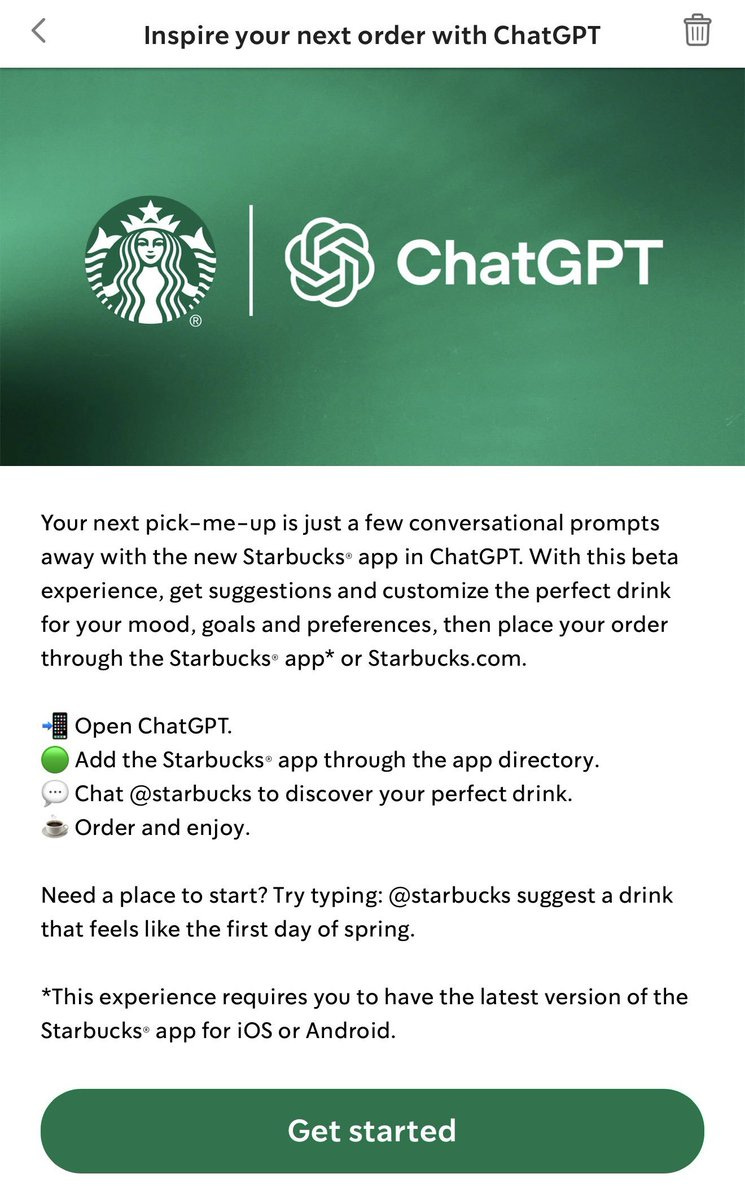

Alex Cohen wrote:

Just tried this out!

I asked ChatGPT to order "my usual".

It thought for 30 seconds, logged into my Starbucks account, loaded funds, and placed my order.

20 minutes later, 17 Mocha Frappuccinos arrived to my door.

It got the order completely wrong and I spent $123.59, but man was it beautiful

Shiv Kampani wrote:

For an extra layer of obfuscation, I always call my OpenAI key:

COPILOT_API_KEY=sk_ 169f1af5-0087-4d3d-a713-d8bd62ef7675

Even if it leaks I know it won’t be used.

The joke here is that they label their API key as one that only works with Copilot because no one would use that… let alone steal it.

Chubby wrote:

Complaints about the new model continue.

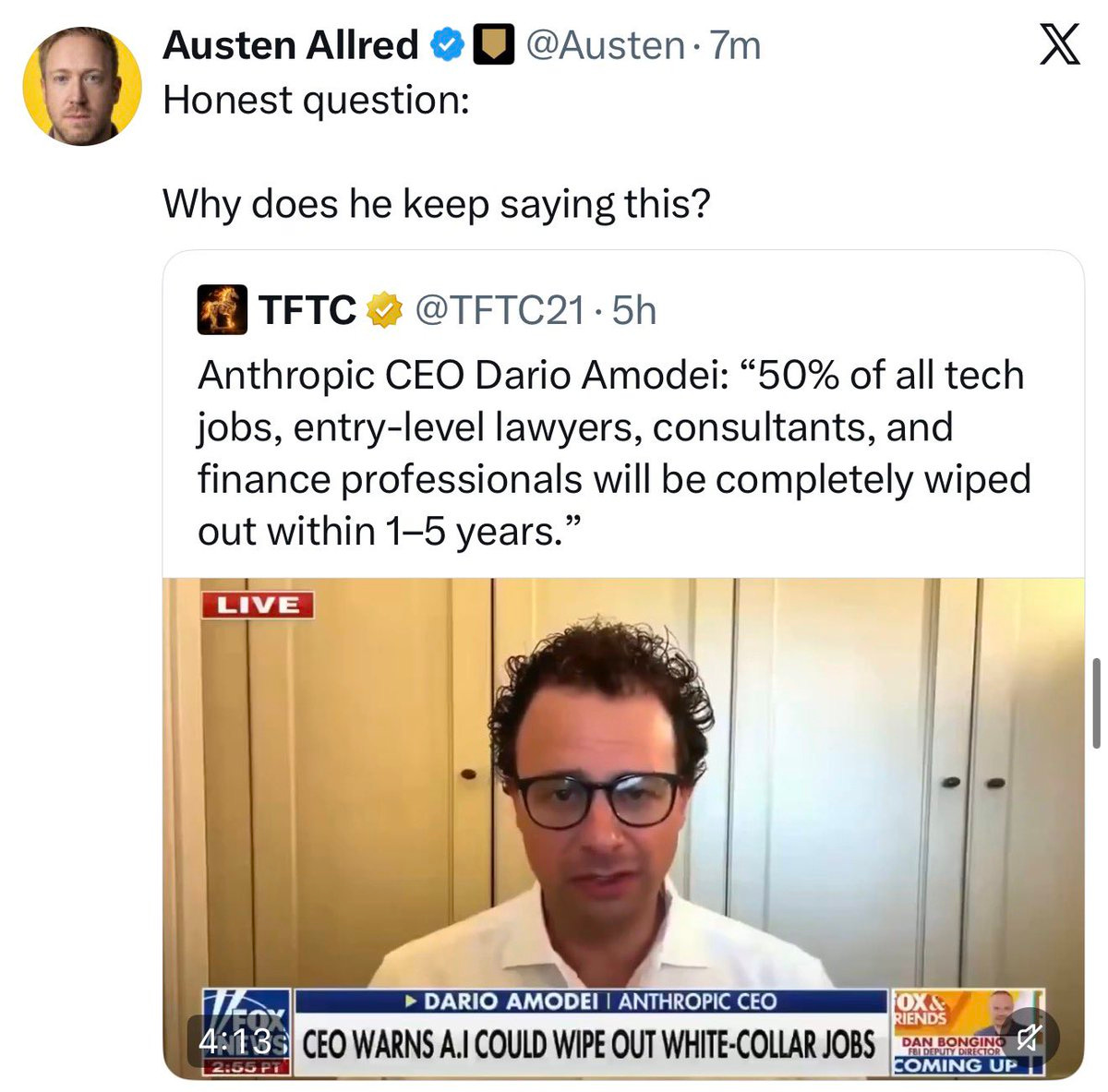

Dario and the Doomers

Robert Sterling wrote:

Anthropic’s CEO keeps talking about AI wiping out jobs because he’s trying to IPO this year.

If he positions Claude as armageddon for jobs, his TAM becomes “all white-collar human labor,” not just AI agents or SaaS.

It’s completely self-interested. All the concerns he’s expressing about job disruptions are fake.

It’s a marketing gambit to create hype and FOMO among the people he needs more than anyone else this year: institutional investors like BlackRock, Fidelity, pension funds, and sovereign wealth funds.

If these investors pay for tickets on the hype train—if he can make them believe that AI will eliminate half of white-collar jobs, with Anthropic, as the dominant leader in enterprise AI, positioned to capture the surplus margin—the IPO will be oversubscribed and Anthropic can raise more funds for the company at a higher valuation.

But Dario (or, at least, his bankers) knows that these investors are more fiscally disciplined than they used to be. A lot of them got burned during Covid SPAC-mania and don’t want to risk it again. They’re going to challenge Anthropic about whether it will ever get to sustainably high gross margins, or if its arms race with OpenAI will lead to kilowatt-hours permanently suppressing gross margins. They’re going to ask pointed questions about Anthropic’s massive capex and whether it will ever generate accretive ROIC.

And Dario might not have the answers they’re looking for.

So that’s why—to answer Austen’s smart question—you keep seeing Dario in the news and the podcast circuit, spreading doom and gloom about widespread job loss.

It’s not to make you afraid of losing your job. It’s to get Wall Street afraid of missing out on his IPO.

Whether or not Sterling is right about motive, he is pointing at the right thing: AI leaders increasingly exaggerate what their tech can do and how it will impact society.

The cries that the sky is falling began to increase last week with all of the uproar about Mythos and its hacking abilities. Dario's claims began last year during an interview with Axios and were recently repeated in an interview [the only link I could find was on Facebook Reels] with Fox News. There he restated his initial claims that AI could wipe out half of entry-level white-collar jobs and push unemployment to 10–20% in the next one to five years.

Dario isn’t alone in decrying the many dangers and forthcoming dystopia. At various times you can see similar statements from Sam Altman and a bevy of other researchers and industry pundits.

There is a degree of truth to their warnings, but there are also many other variables that are rarely discussed and those variables can meaningfully alter these outcomes. It leaves me asking:

Is this really the right strategy? Or is this why sentiment around AI has largely gone negative?

What Would Steve Jobs Have Done?

Fortunately, we have a template. An example of a CEO who knew how to build excitement and anticipation in the face of rapid change. Probably the best example of this is Steve Jobs explaining how computers would expand human capabilities in this video:

It’s easy to dismiss that metaphor because someone might point out that explaining computers is different from explaining AI. I could argue that, but instead let me point you to an example when Steve Jobs spoke about AI directly without ever using that term. This speech was given in 1983. The Macintosh hadn’t even launched yet, but here he is describing a fanciful vision of the future that has now been made real by modern large language models.

I’ve fast-forwarded the video and linked to the moment when he begins speaking about AI. It’s the last 80 seconds of the video.

AI Has a Framing Problem

Right now all of the focus is on how AI can autonomously pursue goals, and many times, this focus is presented in troubling ways. Steve Jobs wouldn’t have done that.

He would have put the focus on human agency.

He would have featured demos showing normal people—not researchers—using AI in ways that allowed them to do things that they would have previously considered impossible. He would have shown how these tools can expand and extend human capabilities. Not how they are going to destroy jobs and rob life of all meaning.

He would have had a cool catchphrase like “1,000 Songs in Your Pocket.” I’m no Steve Jobs, but can you imagine him walking out on stage and saying something like: “AI is not important because it imitates intelligence. It is important because it extends yours.”

See the difference?

Why Does Framing Matter?

Framing matters because it never remains just framing. Very quickly, it becomes a set of development goals.

The way leaders talk about AI tells companies what this technology is for. If they describe it primarily as a mechanism for replacing workers, then automation becomes the goal. Every feature, each new improvement starts to bend things in that direction. The question becomes: “How do we remove the person from the process?”

But if they describe AI as a tool for extending human capability, then the priorities shift. The question becomes: “How do we make people dramatically more effective than they were before?”

That distinction matters. It shapes the path of development. It influences what gets funded, what gets built, what gets rewarded, and what people understand to be the obvious application of the technology.

It also matters because public opinion isn’t some secondary concern that can be cleaned up later. A technology introduced to the public as a threat will be understood to be one. I’d argue that this is where we are right now.

People don’t encounter these tools in a vacuum. By the time most newcomers use AI, they’ve already been told what kind of thing it is. If the dominant story is job destruction, deception, loss of control, and autonomous behavior, then every future use case will be filtered through that lens. Even legitimate benefits will be interpreted suspiciously. A technology introduced as leverage for ordinary people has a much better chance of being adopted as useful infrastructure. A technology introduced as a rival or a threat will be resisted and feared. That’s the path that leads to regulation.

But the biggest issue is that framing creates permission structures.

When the people building AI repeatedly talk about it as a job-destruction engine, they aren’t merely predicting the future. They’re telling the market what this technology is for. They’re giving executives and their boards a vocabulary for adoption. More than that, they’re giving them justification. If AI is framed as the tool that replaces labor, then that’s the use case investors reward.

It begins to feel like the serious and forward-looking thing to do. At that point the rhetoric isn’t about describing a possible future. It’s helping to create one… and at some point, people begin to ask if that’s the future they want.

That’s the real danger here.

The problem isn’t merely that some AI leaders sound dark, or apocalyptic, or strangely eager to narrate the most disturbing edge cases. The problem is that their framing answers the most important question before the public ever has a chance to ask it:

What is this technology actually for?

Is it for replacing people?

Is it for centralizing power?

Is it for removing human beings from more and more forms of work and judgment?

Or is it for expanding the range of what human beings can do?

How that question gets answered matters because once the answer takes hold, it stops being theoretical. It starts shaping product decisions, investment priorities, political realities, and eventually the society we’re building.

Interesting Articles and Conversations

There’s an interesting paper that argues AI can act like it’s thinking and aware, but isn’t actually having an inner experience. It’s following patterns and rules that look like consciousness from the outside, but there’s nothing “felt” on the inside.

More than that, the author goes on to argue that giving AI a body might make it behave more like a human, but it still wouldn’t actually feel anything, because the underlying process is just computation, and computation isn’t the kind of thing that can ever produce emotional experience.

The paper can be read here.

This paper has also sparked an interesting discussion on X with both supporters and detractors. If you’d like to read through the thread, it’s located here.

Thanks again for reading! If you know of other people who would be interested in these articles, please share them. If you have any questions or want to say hi, you can reply to this email or comment directly on the post.